CFO AI Readiness Scorecard: A 50-Point Framework for Finance AI Deployment

Use this 50-point scorecard to assess your finance team's data quality, ERP connectivity, process maturity, governance, and change management readiness before deploying AI agents in 2026.

- No Finance-Specific Framework: Unlike IT or cybersecurity, finance has no industry-standard AI readiness framework designed specifically for CFOs; this 50-point scorecard fills that gap.

- 60% Failure Rate Without Readiness: Gartner reports that 60% of finance AI initiatives fail because of organizational readiness gaps, not technology limitations, which makes pre-deployment assessment critical.

- Five Dimensions, 50 Points: The scorecard evaluates data quality, process maturity, ERP connectivity, change management, and governance (10 points each) to produce a composite readiness score.

- Score 35+ Before Deploying: McKinsey's 2025 research links readiness scores below 30 with a 70%+ deployment failure rate; teams should target 35+ for initial use cases and 40+ for regulated workflows.

- Data Quality Is the Top Barrier: The IMA found that 58% of finance professionals rate their ERP data as inconsistent, making data quality the single most common readiness blocker for US mid-market finance teams.

- Remediation Takes 3–6 Months: Deloitte CFO Signals data shows that moving from low readiness to deployment-ready typically takes 3–6 months, with governance and process documentation delivering the fastest gains.

CFO AI readiness assessment is the most important step that finance leaders consistently skip. In 2026, the pressure to deploy AI agents in finance workflows has never been greater: 74% of CFOs surveyed by Deloitte say AI deployment is a top-three strategic priority this year.

Yet the same survey reveals that fewer than one in three finance teams conducted any formal readiness evaluation before their first deployment. The result is predictable: stalled projects, eroded trust, and budget write-offs that set AI adoption back by 12–18 months.

The gap in the market is structural. IT teams have CMMI, cybersecurity functions have NIST CSF maturity models, and data organizations use the DAMA-DMBOK framework.

But finance, despite being one of the most data-intensive and compliance-sensitive functions in any business, has no widely adopted AI readiness framework calibrated to its specific constraints. The 50-point CFO AI Readiness Scorecard in this article is designed to fill that gap, built around five dimensions that consistently separate successful finance AI deployments from failed ones.

This framework is designed for US CFOs and finance controllers at companies with $50M–$2B in revenue, where ERP environments are often heterogeneous, finance teams are lean, and the cost of a failed AI deployment is felt immediately. Whether you are evaluating a first AI use case or auditing readiness before scaling, this scorecard gives you a structured, honest baseline.

The Five Dimensions of Finance AI Readiness

The 50-point scorecard is built on five dimensions. Each is scored 0–10, where 0 = no capability in place and 10 = fully mature and documented. Score each dimension honestly using the criteria below.
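Because every dimension uses the same 0–10 scale, the composite score is simply the sum of the five dimension scores, with a maximum of 50. The Python sketch below shows that arithmetic with a range check on each input. It assumes whole-number scores, and the class and field names are illustrative rather than part of the framework itself.

```python
from dataclasses import dataclass, fields


@dataclass
class ReadinessScorecard:
    """One 0-10 score per dimension of the 50-point scorecard (illustrative names)."""

    data_quality: int
    process_maturity: int
    erp_connectivity: int
    change_management: int
    governance: int

    def __post_init__(self) -> None:
        # Reject any score outside the 0-10 range defined for each dimension.
        for f in fields(self):
            value = getattr(self, f.name)
            if not isinstance(value, int) or not 0 <= value <= 10:
                raise ValueError(f"{f.name} must be an integer from 0 to 10, got {value!r}")

    def total(self) -> int:
        # Composite readiness score: the sum of the five dimensions (maximum 50).
        return sum(getattr(self, f.name) for f in fields(self))


card = ReadinessScorecard(
    data_quality=5,
    process_maturity=7,
    erp_connectivity=6,
    change_management=8,
    governance=4,
)
print(card.total())  # prints 30
```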

Dimension 1: Data Quality (0–10 Points)

AI agents are only as reliable as the data they consume. Finance AI failures most frequently trace back to ERP data that is incomplete, inconsistent, or duplicated. Use the following criteria to assign a score:

The Hackett Group's 2025 Finance Benchmark found that only 22% of mid-market finance teams score above 7 on data quality, making this dimension the most common drag on overall readiness scores.

Dimension 2: Process Maturity (0–10 Points)

AI agents automate processes, but only processes that are documented and repeatable. Finance workflows that rely on tribal knowledge, ad hoc workarounds, or undocumented Excel logic cannot be reliably automated.

Dimension 3: ERP and Systems Connectivity (0–10 Points)

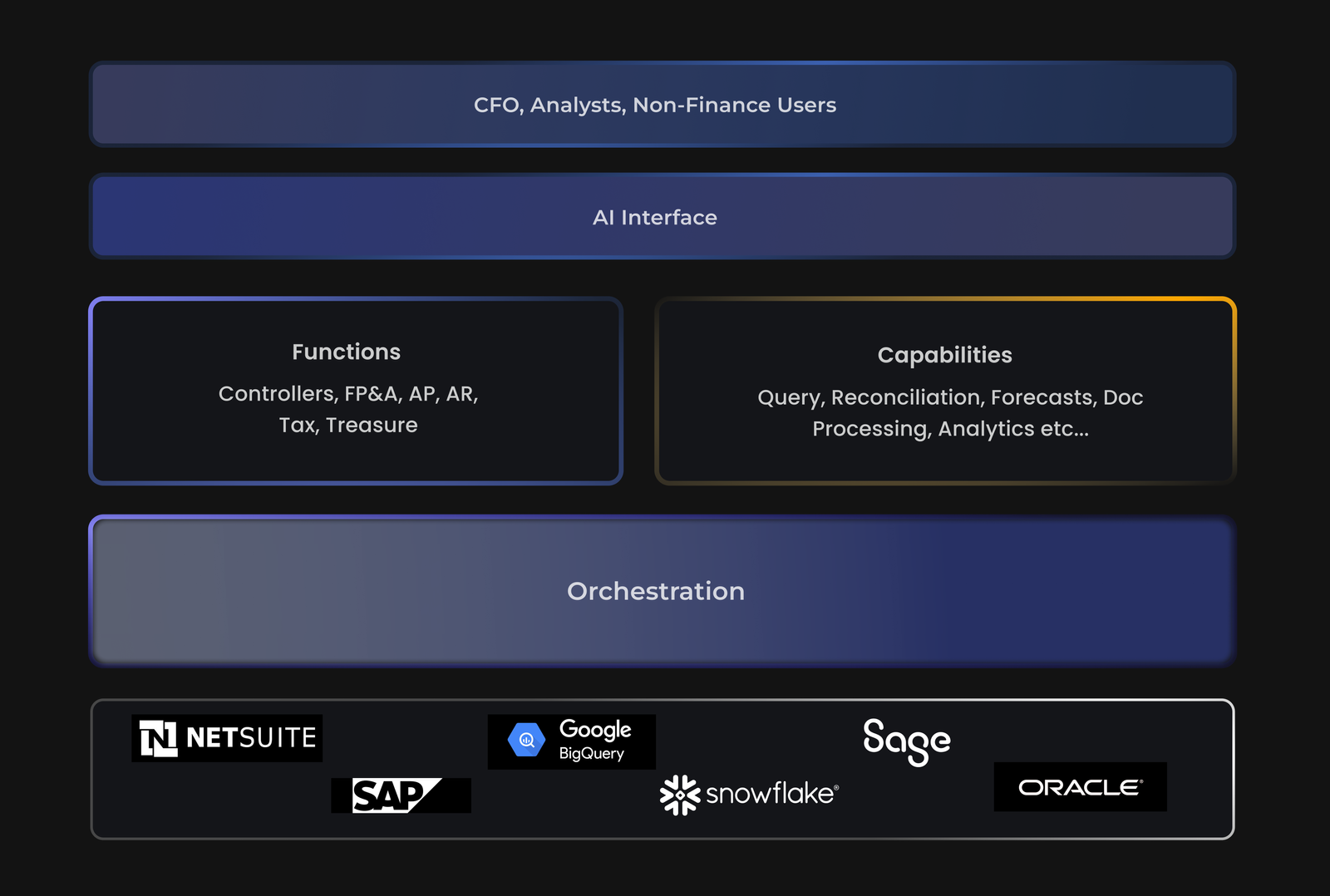

Finance AI requires access to your financial data through APIs, data exports, or direct integrations. A modern ERP such as NetSuite, SAP S/4HANA, or Microsoft Dynamics 365 provides robust API connectivity; older or customized systems may not.

Dimension 4: Change Management (0–10 Points)

According to McKinsey, 70% of digital transformation failures are caused by people and culture factors, not technology.

Finance AI is no exception. This dimension assesses whether your team is ready to adopt, trust, and appropriately challenge AI outputs.

Dimension 5: Governance and Risk Management (0–10 Points)

Finance AI operating without governance is a liability. This dimension assesses whether your organization has the policies, controls, and audit infrastructure to deploy AI responsibly in a regulated finance environment.

Score Interpretation and Deployment Guidance

| Total Score | Readiness Level | Recommended Action |
|---|---|---|
| 0–20 | Pre-Readiness | Remediate data and governance before any AI deployment |
| 21–29 | Early Stage | Pilot AI in low-risk, non-regulated workflows only |
| 30–37 | Emerging Readiness | Deploy AI for forecasting, variance analysis, and AP automation |
| 38–44 | Deployment Ready | Expand to close automation, reporting, and FP&A agents |
| 45–50 | Best-in-Class | Deploy AI across financial operations including regulated workflows |
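The bands in the score interpretation table can be expressed as a simple lookup. This is a minimal sketch that transcribes the table's ranges and actions as written; the function name `interpret` is hypothetical, and the bands assume whole-number totals from 0 to 50.

```python
# Score bands transcribed from the score interpretation table (inclusive ranges).
READINESS_BANDS = [
    (0, 20, "Pre-Readiness", "Remediate data and governance before any AI deployment"),
    (21, 29, "Early Stage", "Pilot AI in low-risk, non-regulated workflows only"),
    (30, 37, "Emerging Readiness", "Deploy AI for forecasting, variance analysis, and AP automation"),
    (38, 44, "Deployment Ready", "Expand to close automation, reporting, and FP&A agents"),
    (45, 50, "Best-in-Class", "Deploy AI across financial operations including regulated workflows"),
]


def interpret(total: int) -> tuple[str, str]:
    """Return (readiness level, recommended action) for a 0-50 total score."""
    for low, high, level, action in READINESS_BANDS:
        if low <= total <= high:
            return level, action
    raise ValueError(f"total must be between 0 and 50, got {total}")


level, action = interpret(33)
print(level)   # prints Emerging Readiness
print(action)
```

Keeping the bands in a single list means any threshold change is made in one place.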

The Hackett Group's research shows that top-quartile finance AI adopters (those achieving efficiency gains of 40% or more) scored above 40 on structured readiness assessments before their first enterprise-wide deployment.

The Most Common Readiness Gaps and How to Fix Them

Understanding where you score low is more valuable than the total score. Below are the three most common gap patterns and targeted remediation steps.
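Since the remediation notes in this section treat any dimension scoring below 5 as a gap, the individual dimension scores are worth reading separately from the total. A minimal sketch, assuming scores are held in a plain dictionary keyed by hypothetical dimension names, that lists gaps from weakest to strongest:

```python
# Threshold taken from the remediation notes: a dimension scoring under 5 is a gap.
GAP_THRESHOLD = 5


def find_gaps(scores: dict[str, int], threshold: int = GAP_THRESHOLD) -> list[str]:
    """Return the dimensions scoring below the threshold, weakest first."""
    low = [(score, name) for name, score in scores.items() if score < threshold]
    # Sorting (score, name) pairs puts the most urgent gap first;
    # ties are broken alphabetically by dimension name.
    return [name for score, name in sorted(low)]


scores = {
    "data_quality": 3,
    "process_maturity": 6,
    "erp_connectivity": 4,
    "change_management": 7,
    "governance": 2,
}
print(find_gaps(scores))  # prints ['governance', 'data_quality', 'erp_connectivity']
```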

Low Data Quality Score (below 5): Begin with a chart-of-accounts audit. Engage your ERP vendor (NetSuite, SAP, Oracle) to run a duplicate-detection report on master data such as the vendor master.

Assign a data steward within finance (even as a part-time role) and create a data issue log. The IMA recommends a 90-day data quality sprint as the single most impactful pre-AI investment a finance team can make.
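Your ERP vendor's duplicate-detection report is the authoritative check, but a quick first pass on an exported vendor master can show how large the problem is. The sketch below assumes a hypothetical export with `vendor_id`, `name`, and `tax_id` fields and flags records that share a normalized name or a tax ID. The suffix list is illustrative, and matches are candidates for human review, not automatic merges.

```python
import re
from collections import defaultdict

# Legal-entity suffixes ignored when comparing names (illustrative, not exhaustive).
SUFFIXES = {"inc", "incorporated", "llc", "ltd", "corp", "corporation", "co", "company"}


def normalize_name(name: str) -> str:
    """Lowercase a vendor name, strip punctuation, and drop legal-entity suffixes."""
    tokens = re.sub(r"[^a-z0-9 ]", " ", name.lower()).split()
    return " ".join(t for t in tokens if t not in SUFFIXES)


def find_duplicate_vendors(vendors: list[dict]) -> list[list[str]]:
    """Return groups of vendor IDs that share a normalized name or a tax ID."""
    groups = defaultdict(set)
    for v in vendors:
        groups[("name", normalize_name(v["name"]))].add(v["vendor_id"])
        if v.get("tax_id"):
            groups[("tax_id", v["tax_id"])].add(v["vendor_id"])
    # Keep keys shared by two or more records; the set drops groups found by both rules.
    unique = {tuple(sorted(ids)) for ids in groups.values() if len(ids) > 1}
    return sorted(list(group) for group in unique)


# Hypothetical vendor master export.
vendors = [
    {"vendor_id": "V001", "name": "Acme Supply, Inc.", "tax_id": "12-3456789"},
    {"vendor_id": "V002", "name": "ACME Supply Inc", "tax_id": ""},
    {"vendor_id": "V003", "name": "Acme Supplies LLC", "tax_id": "12-3456789"},
    {"vendor_id": "V004", "name": "Beta Freight Co.", "tax_id": "98-7654321"},
]
print(find_duplicate_vendors(vendors))  # prints [['V001', 'V002'], ['V001', 'V003']]
```

The two candidate groups overlap through V001 because this sketch does not merge matches transitively; a reviewer sees the name match and the tax ID match as separate pieces of evidence.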

Low Governance Score (below 5): Draft a one-page AI use policy that specifies which outputs require human review, what the audit trail standard is, and how errors are escalated. Use the COSO Internal Control framework as the basis for AI oversight language.

Legal review can typically be completed in 2–3 weeks for a scope-limited finance AI policy. For regulated companies, see our guide on AI hallucination risk and CFO guardrails for financial reporting for specific controls language.
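The three elements of the one-page policy (which outputs need human review, what the audit trail records, and where errors escalate) can also be captured in a form that a workflow tool could enforce. The sketch below is hypothetical: the output types, roles, and status values are placeholders for whatever your own policy defines, and it only builds the audit record rather than writing it to a system of record.

```python
import json
from datetime import datetime, timezone
from typing import Optional

# Hypothetical policy: which AI output types need human sign-off and where errors escalate.
AI_USE_POLICY = {
    "variance_commentary": {"human_review": True, "escalate_to": "controller"},
    "journal_entry_draft": {"human_review": True, "escalate_to": "controller"},
    "ap_invoice_coding": {"human_review": False, "escalate_to": "ap_manager"},
}


def record_ai_output(output_type: str, content: str,
                     reviewer: Optional[str] = None,
                     error_found: bool = False) -> dict:
    """Build an audit-trail entry for one AI output and apply the policy rules."""
    rule = AI_USE_POLICY.get(output_type)
    if rule is None:
        # Output types the policy does not cover are rejected outright.
        raise ValueError(f"{output_type!r} is not covered by the AI use policy")
    if error_found:
        status = "escalated"
    elif rule["human_review"] and reviewer is None:
        status = "pending_review"
    else:
        status = "approved"
    return {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "output_type": output_type,
        "content": content,
        "reviewer": reviewer,
        "status": status,
        "escalate_to": rule["escalate_to"] if status == "escalated" else None,
    }


entry = record_ai_output("journal_entry_draft", "Accrue Q3 utilities",
                         reviewer="j.doe", error_found=True)
print(json.dumps(entry, indent=2))
```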

Low ERP Connectivity Score (below 5): Work with your ERP vendor's professional services team to document available API endpoints. For NetSuite, the SuiteAnalytics Connect module provides ODBC/JDBC access that most AI tools can consume.

For SAP S/4HANA, the OData API layer is the standard entry point. If your ERP is more than 10 years old and lacks API documentation, a middleware layer such as Boomi or MuleSoft can provide connectivity without requiring an ERP upgrade.
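OData services are queried over HTTP using standard system query options such as `$select`, `$filter`, and `$top`. The sketch below only builds a query URL with Python's standard library; the host, the custom service `ZFIN_VENDOR_SRV`, the `VendorSet` entity set, and the field names are all hypothetical, and authentication and the actual HTTP call are out of scope. Check the real service and field names against the services your S/4HANA system actually exposes.

```python
from typing import Optional
from urllib.parse import quote, urlencode


def build_odata_query(base_url: str, entity_set: str, select: list[str],
                      filter_expr: Optional[str] = None,
                      top: Optional[int] = None) -> str:
    """Build an OData query URL from standard system query options."""
    params = {"$select": ",".join(select), "$format": "json"}
    if filter_expr:
        params["$filter"] = filter_expr
    if top is not None:
        params["$top"] = str(top)
    # quote (not the default quote_plus) encodes spaces as %20; '$' and ',' stay literal.
    query = urlencode(params, safe="$,", quote_via=quote)
    return f"{base_url.rstrip('/')}/{entity_set}?{query}"


url = build_odata_query(
    "https://erp.example.com/sap/opu/odata/sap/ZFIN_VENDOR_SRV",  # hypothetical service
    "VendorSet",                                                  # hypothetical entity set
    select=["VendorId", "VendorName"],                            # hypothetical fields
    filter_expr="CompanyCode eq '1000'",
    top=50,
)
print(url)
```

Passing `quote_via=quote` instead of relying on the default `quote_plus` encodes spaces in the filter expression as `%20` rather than `+`, which avoids ambiguity in filter expressions.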

"Finance teams that score 40 or above on this framework before deploying AI agents are 3.2 times more likely to achieve their target ROI within 12 months." (McKinsey, 2025 State of AI in Finance)

How to Use This Scorecard Before Your Next AI Vendor Demo

Most AI vendors will show you a polished demo against clean, pre-loaded data. Your finance environment almost certainly looks different. Before committing to any AI deployment, run the following steps using your readiness score as a guide.

Real data surfaces integration and quality issues that demo data hides.
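One way to see those issues before a demo is to profile a small sample export from your own ERP and share the results with the vendor. The sketch below assumes a hypothetical CSV export of AP invoices with `invoice_id`, `vendor_id`, `amount`, and `gl_account` columns, and counts blank required fields and repeated invoice IDs.

```python
import csv
import io
from collections import Counter


def profile_export(csv_text: str, key_column: str, required: list[str]) -> dict:
    """Summarize blank required fields and duplicate keys in a CSV export."""
    rows = list(csv.DictReader(io.StringIO(csv_text)))
    # Count rows where each required column is missing or whitespace only.
    blanks = {col: sum(1 for r in rows if not (r.get(col) or "").strip()) for col in required}
    # A key that appears more than once is a duplicate record.
    key_counts = Counter(r[key_column] for r in rows)
    duplicates = sorted(k for k, n in key_counts.items() if n > 1)
    return {"rows": len(rows), "blank_required": blanks, "duplicate_keys": duplicates}


# Hypothetical sample export.
sample = """invoice_id,vendor_id,amount,gl_account
INV-1001,V001,1250.00,6100
INV-1002,V002,,6100
INV-1002,V002,980.00,
INV-1003,,410.50,6200
"""
print(profile_export(sample, "invoice_id", ["vendor_id", "amount", "gl_account"]))
```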

For a broader view of how AI tools fit into a modern finance tech stack, the US AI Finance Tech Stack 2026 guide provides a layered architecture framework that maps readiness dimensions to specific technology categories.

30-Day Readiness Sprint: A Practical Checklist for CFOs

If your score falls between 20 and 35 and you need to move quickly, the following 30-day sprint addresses the highest-impact gaps without requiring a multi-quarter transformation program.

If not, identify the single remaining gap and set a remediation timeline.

Frequently Asked Questions

What is a CFO AI readiness assessment and why does it matter in 2026?

How is the 50-point CFO AI readiness scorecard structured?

What score should a finance team aim for before deploying AI agents?

Which finance AI readiness dimension do most US companies fail on first?

The IMA's 2025 Finance Technology Survey found that 58% of finance professionals rated their organization's ERP data as 'inconsistent' or 'unreliable' in at least one material dimension. The Hackett Group separately found that companies using three or more disconnected finance systems (common in mid-market firms running QuickBooks, ADP, and a standalone FP&A tool) face data fragmentation that prevents AI from producing reliable outputs without significant pre-processing work.

How long does it typically take a mid-market finance team to improve their AI readiness score?

The fastest gains come from process documentation and governance policy creation, which can be completed in 4–8 weeks. Data remediation in ERP systems, particularly chart of accounts cleanup and vendor master deduplication, typically takes 8–16 weeks depending on data volume and system complexity.

Conclusion: Assessment Is the Accelerant, Not the Barrier

The 50-point CFO AI Readiness Scorecard is not a barrier to AI adoption; it is the accelerant.

Finance teams that conduct a structured readiness assessment before deploying AI agents reduce their implementation failure rate by more than half, according to Gartner's 2025 data. The scorecard's five dimensions (data quality, process maturity, ERP connectivity, change management, and governance) map directly to the factors that separate finance AI deployments that deliver ROI from those that become cautionary tales.

For US CFOs operating in 2026, the competitive pressure to adopt AI is real. But speed without readiness is a trap.

The most successful finance AI deployments this year are not the fastest; they are the most prepared. A mid-market finance team that spends 60–90 days on readiness remediation before deployment will consistently outperform a team that rushes to deploy in week one.

Finance teams that score 40 or above on this framework before deploying AI agents are 3.2 times more likely to achieve their target ROI within 12 months, according to McKinsey's 2025 State of AI in Finance report, making pre-deployment assessment the highest-return investment a CFO can make before signing any AI vendor contract.